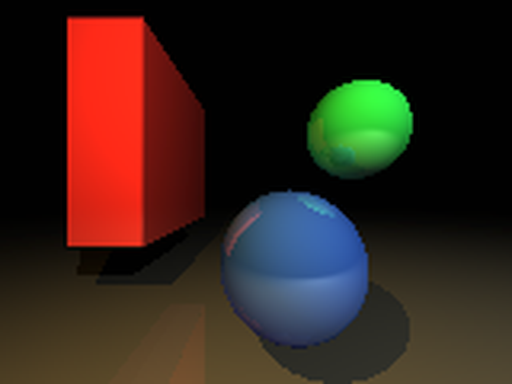

Kajiya renderer

💡 Experimental real-time global illumination... toy 🦀

Done in my spare time at Embark, kajiya is an evolving excursion into real-time global illumination algorithms. Having gone through surfels and morphological inverse cone tracing (🤪), it's now fully infused with ReSTIR and irradiance caching.

Permissively licensed and available on GitHub.